Last week, I grumbled about Apple’s “vision,” which is visionless at worst and horribly dystopian at best. What could have been different? I found myself imagining what WWDC23’s big announcement might have been if Steve Jobs were still living.

Let’s join Jobs’ keynote, already in progress:

We do have… one more thing. The rumor mill has been going crazy; they think they know what we’re going to release. Clicks to the next slide that shows a collage of headlines about Apple releasing an augmented reality headset.

We’ll give credit where credit is due: we are going to introduce something new today, but it isn’t going to be what they imagine. Clicks to slide of a knight’s helmet with an Apple logo stamped on it.

The rumor mill gets fixated on what they know is possible — things like “Augmented Reality” — but here at Apple we don’t ask, “How can we make the best possible version of existing technology?” We ask, “What is the problem that needs to be solved and how can we do it?”

When people talk about “Augmented Reality” what they really are wanting is “Ambient Computing.” We all know how influential technology is in our lives and we all want our technology better integrated into our lives.

Clicks to famous slide with street sign saying, “Humanities and technology.” Here at Apple, we have been talking for years about the convergence of the humanities with technology, because we believe that technology brought into our everyday lives can be transformative and enable us to be even better at the passions that drive us.

Clicks to picture of the first Mac. When we introduced the original Macintosh, we introduced a computer that was focused on familiar concepts rather than obscure jargon. Clicks to a picture of a colorful iMac G3 and an iPod. A couple of decades later, we introduced the iPod and the digital hub on the Mac. Clicks to a picture of other circa-2000 music players. Our competitors made absurd music players that drew the focus to the device; we made a device that virtually disappeared into the songs and made music easy to purchase in digital form for the first time.

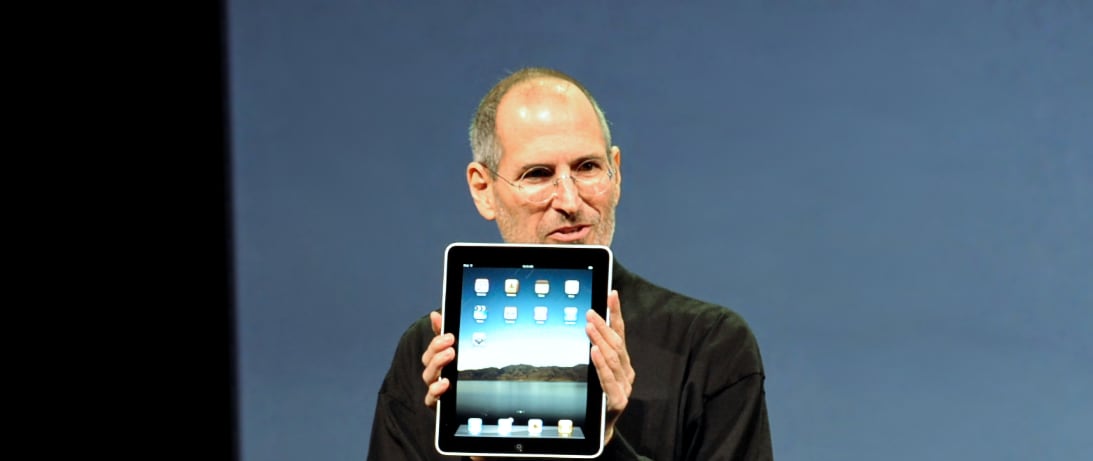

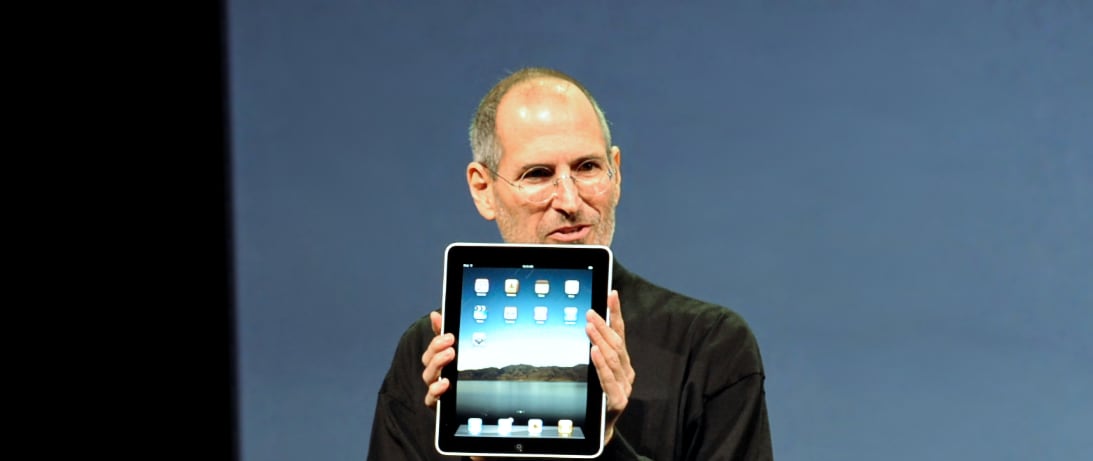

The iPod was just the start. With it, we laid the first stone for what we could accomplish when we brought our unique integration and vision for human-focused technology to a mobile device. Clicks to photo of iPhone. When I introduced the iPhone to you, I said we were introducing an iPod, a phone, an Internet communicator. A device that brought the power of the Mac into the palm of your hand and used the most natural input device of all: fingers.

Today, Apple is going to do it again, as only we can, with a breakthrough in ambient computing. *A dramatic video of… a home with various Apple products plays.*

I’m proud to introduce Apple Home. We’ve been building this platform right before you for years. You want to be able to access your photos, games, music — your digital life — in the place you live your life. Brings up knight helmet with Apple logo again. You don’t want to put a helmet on to experience any of it.

That’s just stupid.

We built the iPhone on the unique integration only Apple could achieve, using all the progress we made with the iPod. We’re going to do it again with Siri, the HomePod and every Apple device you already own. Apple Home is the digital hub we’ve been preparing for years.

Remember when we dreamed of technology being like this? Plays a clip of Star Trek: The Next Generation where Captain Picard taps a large touch screen PADD and then says, “Computer, display the video on the Bridge.” We like that! Technology should get whatever we want to wherever we want it in the way we want to do it. Computers should think like us, not make us think like them.

How’s that promise being realized today? Plays a video of a person saying “Alexa, play Lavender Haze by Taylor Swift.” Nothing happens. The person repeats the command. The camera pans to an Echo device that says, “Lavender is a small aromatic evergreen shrub of the mint family, with narrow leaves and bluish-purple flowers. Lavender has been widely used in perfumery and medicine since ancient times.” The person, irritated, says, “Alexa, I want the song, not the definition.” Stars and Stripes Forever begins playing.

Let’s just say there’s some room for improvement. Audience laughs. We are already light-years ahead of our competition with the HomePod, a truly revolutionary speaker that brings the best digital assistant, Siri, together with the best speakers we’ve ever produced and, we think, the best you’ve ever heard. But the problem is that most people only have a HomePod or two in a few places in their home and the commands are still limited.

We can do even better.

Asking for things we want or need using voice is perhaps the most natural interface we know, if only we could forget commands and just converse. The latest advances in machine learning can help by making Siri even more powerful and better able to do everything from understanding what song we want to play to helping us compose an important letter.

But machine learning comes at a cost. It needs a lot of power to do this kind of conversational interaction, so our competitors send the requests off to their own computers to be processed. Not that they would keep the information or use it in any way that would bother us, right? Everyone laughs.

Do we really want to bring machine learning that sends everything in our homes to just anyone? Clicks to picture from the 1984 Apple commercial, but with Mark Zuckerberg as the Big Brother figure. At Apple we’ve solved a lot of this dilemma by incorporating Neural Engines into our latest iPhones, iPads and Macs, but what if that applied to the whole home?

Apple Home is a breakthrough because we have totally rethought the smart home in a way no one else even can. We’re going to take the power of our Apple silicon and the various Apple devices most homes have and make them work together like the instruments of a beautiful orchestra.

Years ago, we introduced Xgrid to spread a complicated task across Macs. Lots of businesses have thousands of computers to, say, render a movie. Clicks to a picture of the Toy Story character Woody. But what do we have in our homes now? A collection of devices that are often sitting idle.

No one else has the digital ecosystem we have here at Apple. We asked ourselves what if we took every one of those devices signed into an individual iCloud account and spread tasks at home between them? We can offer the best AI on the planet without a single byte of our data being shipped outside of the home. We call it Home School and we’re excited to demonstrate it at this week’s WWDC workshops so you can build incredible apps with it.

Our competitors envision the future as something like this. Clicks to a slide of a dad making breakfast in a Vision Pro-like visor, like the one shown at WWDC23, with his daughter looking sad. Are we going to do one of these? Nah. We think that’s isolating from the people who matter the most to us.

We’re going to be introducing a new line of Apple Home devices that use Home School to offer incredible speed, industry-leading privacy and constant availability without turning us into cyborgs. First, I want to show you how it all comes together with the new Apple HomeScreen.

Walks over to a kitchen counter set. “Siri, compose an e-mail to Phil to see if he’s available for tacos at one of the places I like to get tacos as soon as I’m available today.” A camera pans to a 10” smart display as Siri says, “How does this look?” “To: Phil Schiller. Subject: Tacos Today. Hey, Phil, I’m free after the keynote today, want to get tacos at the Taco Shed at 2 p.m.? Steve”

It’s like magic. Apple Home has been processing my e-mails, texts and other information — all using Home School machine learning to keep my privacy safe. Apple Home knows how I sound, so it knows which Phil I would want to write, it knows where I usually go for tacos and it knows my schedule. Boom.

Of course, at times, I may want to tweak what it assumed. No problem. “Siri, I want to send this to Phil Smith instead.” See how it automatically changes the tone to how I sound when writing the other Phil? Magic.

Not a single drop of this is being sent off to some company’s server. Our Apple commitment to privacy is stronger than ever. Using Home School, all my devices are using their idle time or charging time to process information, to understand exactly what I’m going to want it to do, and all of it is end-to-end encrypted. So, Apple Home. It can know you better than any other “artificial intelligence” on the planet — really seems intelligent and buttery smooth in its delivery, doesn’t it? All that information is kept safe and secure and is only accessible to you.

The demo continues. Steve wows the audience with how he can share pictures of a trip with friends by just describing what is in them; play music using Spatial Audio that leverages the new HomeScreen and various HomePods, including tiny ones primarily made to ensure Siri is ambiently everywhere; and generally do big and small computing tasks (even composing sophisticated presentations) using machine learning and voice.

All that may be a fanciful exercise, but unlike the Vision Pro, none of it is farfetched. If Apple had doubled down on some past efforts, kept pace with companies like OpenAI and Google on “generative AI” and understood that smart assistants need to come in a variety of sizes to be useful (not just in the place where one puts a Hi-Fi), this could have been WWDC23.

Apple has pushed grid computing that leverages multiple devices in the past, and still does for professional tools like Compressor. Apple does have numerous devices in most of its customers’ homes that are frequently idle and could be used for machine learning acceleration to provide better-than-ChatGPT machine conversations without violating privacy.

It really comes down to will. Perhaps Vision Pro is easier to explain (though not to sell, given the price). Perhaps Tim Cook wanted a bigger “tent pole” product than Apple Watch that he could call his own, rather than one belonging to Jobs. But Jobs was the master of iterating on products until suddenly they “magically” did what he envisioned, and of wowing people by unleashing them when everything was truly ready.

Offering a wider range of HomePods as “the interface” (from cheap, “just listening for commands” models equivalent to the Echo Flex to screen-based ones) and tying everything together with Mac and iOS/iPadOS feels like the realization of Jobs’ vision of the Digital Hub. It would also perfectly realize the “just as good without violating your privacy” sensibility of the Cook era at Apple.

It’d be a perfectly Apple vision. Alas, we just have Vision Pro instead.

Full Disclosure: Tim does own some Apple stock (AAPL).

Timothy R. Butler is Editor-in-Chief of Open for Business. He also serves as a pastor at Little Hills Church and FaithTree Christian Fellowship.
